Over the past year, we have been presented with a unique set of challenges. Living and working from home has been a challenge for us all, and it has been especially hard on research projects. However, this was the perfect opportunity to test a machine meant for such a scenario. FarmBot gives us a sneak peek into the future of farming: fully automated and sustainable. These are imperative steps towards increasing the availability of fresh produce and cutting the climate impact, plastic packaging, pesticide use, and carbon emissions that continue to pollute the earth and the food we eat. Even though setting up FarmBot itself proved an arduous task, the final result provides a sustainable farming method that allows for automated data collection and plant maintenance.

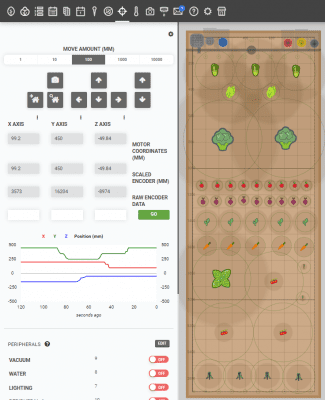

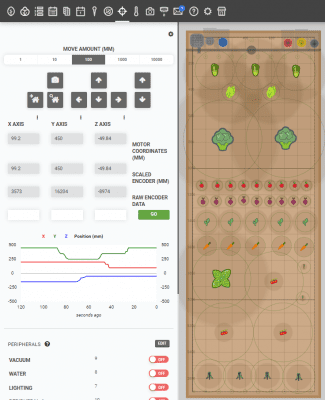

FarmBot is an autonomous, open-source computer numerical control (CNC) farming robot that prioritizes sustainability and optimizes modern farming techniques. Using computer numerical control, FarmBot can accurately and repeatedly conduct experiments with no human input and, therefore, very little error. We can write sequences and plan regimens and events to collect data 24/7, in addition to monitoring the system remotely. This allows us to plan as many plants, crops, inputs, and operations as needed. Reducing cost and making farming more sustainable are priorities of FarmBot.

The FarmBot Genesis features a gantry mounted on tracks attached to the sides of a raised garden bed. The tracks create a great level of precision because the garden bed is represented as a grid in which plant locations and tools can have specific coordinates, therefore allowing endless customization of your garden. The gantry bridges both sides of the track and uses a belt and pulley drive system and V-wheels to move along the X-axis (the tracks) and the Y-axis (the gantry). The cross-slide that controls the Y-axis also utilizes a leadscrew to move the Z-axis extrusion, allowing for up and down movement as well.
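To make the grid idea concrete, here is a minimal Python sketch of laying out plant locations as coordinates on a bed. The bed dimensions, the spacing, and the names `GridPoint` and `planting_grid` are illustrative assumptions, not part of the FarmBot software.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GridPoint:
    """A location in the bed, in millimetres (hypothetical representation)."""
    x_mm: int  # distance along the tracks (X-axis)
    y_mm: int  # distance along the gantry (Y-axis)


def planting_grid(bed_x_mm: int, bed_y_mm: int,
                  spacing_mm: int, margin_mm: int = 0) -> List[GridPoint]:
    """Return evenly spaced plant locations that fit inside the bed."""
    if spacing_mm <= 0:
        raise ValueError("spacing_mm must be positive")
    points = []
    for x in range(margin_mm, bed_x_mm - margin_mm + 1, spacing_mm):
        for y in range(margin_mm, bed_y_mm - margin_mm + 1, spacing_mm):
            points.append(GridPoint(x, y))
    return points


# Example: a 1000 mm x 500 mm bed (assumed size) with plants every 250 mm.
layout = planting_grid(1000, 500, 250)
```

Because every plant and tool slot is just a coordinate pair, the same list could drive sowing, watering, and photographing passes in turn.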

Movement is powered by four NEMA 17 stepper motors fitted with rotary encoders to monitor relative motion. The rotary encoders are monitored by a dedicated processor in the custom Farmduino electronics board, which utilizes a Raspberry Pi 3 as the “brain”. The Farmduino v1.5 system has several useful features, including built-in stall detection for the motors. Stainless steel and aluminum hardware make the machine resistant to corrosion and safe for long-term use outdoors.
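One plausible way to think about encoder-based stall detection is to compare the steps a motor was commanded to take with the steps the encoder actually measured. The sketch below is only an illustration of that idea, not the Farmduino firmware logic; the function name `first_stall_index` and the tolerance value are assumptions.

```python
from typing import Iterable, Optional, Tuple


def first_stall_index(samples: Iterable[Tuple[int, int]],
                      tolerance_steps: int = 10) -> Optional[int]:
    """Return the index of the first sample where the motor appears stalled.

    Each sample is (commanded_steps, encoder_steps). A stall is assumed when
    the two differ by more than tolerance_steps (a hypothetical threshold).
    """
    for index, (commanded, measured) in enumerate(samples):
        if abs(commanded - measured) > tolerance_steps:
            return index
    return None


# Example: the encoder stops advancing after the second sample.
log = [(0, 0), (100, 98), (200, 150), (300, 150)]
stall_at = first_stall_index(log)
```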

The FarmBot Genesis model also includes several tools made of UV-resistant ABS plastic that are interchanged using the Universal Tool Mount (UTM) on the Z-axis extrusion. The UTM utilizes 12 electrical connections, three liquid/gas lines, and magnetic coupling to mount and change tools with ease, allowing for automation at nearly every step of the planting and growing process. Sowing seeds without any human intervention is possible with the vacuum-powered seed injector tool, which is compatible with three different-sized needles to accommodate seeds of varying sizes. Once planting has been completed, regimens can be set up to water plants on a schedule using the watering nozzle. The attachment is coupled with a solenoid valve to control the flow of water, ensuring that each plant receives as much moisture as it needs. The soil sensor tool takes the automation of watering a step further by detecting the moisture of the soil and using the collected data to adjust the amount of water dispensed. A customizable weeding tool uses spikes to push young weeds into the soil before they become an issue. FarmBot uses a built-in waterproof camera to detect weeds and take photos of plants to track growth. All of these tools and features create a completely customizable farming experience without the worry of human error.
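As a rough illustration of how a moisture reading could adjust watering, the Python sketch below converts a moisture deficit into a water volume and then into a valve-open time. The target moisture, the millilitres-per-percent factor, the flow rate, and both function names are assumptions chosen for the example, not values from FarmBot.

```python
def water_volume_ml(moisture_pct: float, target_pct: float = 60.0,
                    ml_per_pct: float = 5.0, max_ml: float = 250.0) -> float:
    """Water to dispense, proportional to how far the soil is below target."""
    deficit = max(0.0, target_pct - moisture_pct)
    return min(max_ml, deficit * ml_per_pct)


def valve_open_seconds(volume_ml: float, flow_ml_per_s: float = 50.0) -> float:
    """How long to hold the solenoid valve open for a given volume."""
    if flow_ml_per_s <= 0:
        raise ValueError("flow_ml_per_s must be positive")
    return volume_ml / flow_ml_per_s


# Example: soil measured at 40% moisture against an assumed 60% target.
volume = water_volume_ml(40.0)
seconds = valve_open_seconds(volume)
```

The cap on volume is a simple safeguard so that a faulty sensor reading cannot flood a plant.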

FarmBot operates using a 100% open-source operating system and web app. In the web app, we can easily control and configure FarmBot and create customizable sequences and routines, all with a drag-and-drop farm designer and a block-code format. From here we are able to receive all information regarding the positioning, tools, and plants within the garden bed. FarmBot’s Raspberry Pi uses FarmBot OS to communicate and stay synchronized with the web app, allowing it to download and execute scheduled events, be controlled in real time, and upload logs and sensor data.

We faced many ups and downs during the hardware and software phases of FarmBot. Between vague reference docs, manufacturing defects, hardware failures, and network security problems, this was an in-depth and at times very frustrating project. However, we didn’t want an easy project. The challenges we faced when building FarmBot, as annoying as they were to debug at the time, helped us gain a great understanding of how this machine works. Through all the blood, sweat, and tears (literally), we learned more from this project than we ever could have imagined. From woodworking to circuitry to programming and botany, we tackled a wide range of issues. But that’s what made this project so worth it. Many times we had to resort to out-of-the-box thinking to resolve issues with the limited components we had. Working as a team also allowed us to bounce ideas off one another, with each of us bringing our own unique talents. Nicolas has a background in Computer Science, which helped with programming FarmBot as well as resolving the software and networking issues that occurred. Hannah’s major is Biological Sciences and she has experience with gardening, which helped with the plant science aspect of the project on top of overall planning and construction. As an electrical engineering major, Nicholas helped with the building process and wiring, and his input was critical in resolving issues with such an advanced electromechanical system. While we recognize FarmBot’s shortcomings, it was an incredible learning experience and an imperative building block towards a more sustainable and eco-friendly future.

Moving forward, we plan on modifying FarmBot to include a webcam, rain barrel, and solar panels. A webcam will give us a live feed of the FarmBot for remote monitoring and will let us take photos for timelapse photography. Rain barrels can be used to collect rainwater, which can be recycled and act as FarmBot’s water source, further increasing its sustainability. Solar panels will provide a dedicated, off-grid energy system, helping further reduce the cost and carbon emissions associated with running FarmBot. We also currently have an MIS capstone team developing ways to pull real-time data for future analysis and dataset usage in other academic settings.

Be on the lookout for future FarmBot updates and feel free to reach out to opiminnovate@uconn.edu for more information.

By: Nicholas Satta, Hannah Meikle, Nicolas Michel

this project grow. In the past few months, Jon Moore has been meeting with other groups at the University to coordinate distribution and, hopefully, to enable the study and documentation of the beneficial effects on mental health that can be achieved by caring for plants. Colleen Atkinson and Karen McComb from Student Health and Wellness have been involved, along with Melissa Bray and Emily Winter from the Ed Psych department. Amy Crim from Residential Life Education has offered ideas on how we might distribute the plants that are currently being grown.

Wearable biometric technology is currently revolutionizing healthcare and consumer electronics. Internet-enabled pacemakers, smart watches with heart rate sensors and all manner of medical equipment now merge health with convenience. This is all a step in the right direction, but not quite the end goal. In fact, it seems that we’re past the point where technology can fix all the problems we’ve created. If this is the case then, at the very least, I think we can have a bit of fun while we’re still alive. Let us not mince words: We’re headed straight for a global environmental collapse, and I propose we go out in style.

The design of the device is relatively simple. I used the FLORA (a wearable, Arduino-compatible microcontroller) as the brain of the device, a Pulse Sensor to read heart rate data from the user’s ear lobe, and several NeoPixel LED sequins for output. The speed at which the LEDs blink is determined by heart rate, and the color is determined by heart rate variance. In the process of designing this, I learned how to work with conductive thread, how to program addressable LEDs, and how to read and interpret heart rate and heart rate variance data from a sensor. As these are all important skills for wearable electronics prototyping, a similar project may be viable as an introduction to wearable product design. Going forward, I would like to explore other options for visualizing output from biometric sensors. I may work on outputting data from such a device to a computer via Bluetooth, incorporating additional sensors into the design, and logging the data for analysis.
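The mapping from heart data to LED behavior can be sketched in a few lines of Python. This is not the code that ran on the FLORA; it is a minimal illustration that assumes one blink per heartbeat, uses RMSSD (root mean square of successive differences between beats) as the variability measure, and maps it onto a red-to-blue hue range. The function names and the 100 ms scaling ceiling are assumptions.

```python
import colorsys
import math
from typing import List, Tuple


def blink_interval_s(bpm: float) -> float:
    """Seconds between blinks, assuming one blink per heartbeat."""
    if bpm <= 0:
        raise ValueError("bpm must be positive")
    return 60.0 / bpm


def rmssd_ms(ibi_ms: List[float]) -> float:
    """RMSSD of inter-beat intervals in milliseconds (0.0 if too few beats)."""
    diffs = [b - a for a, b in zip(ibi_ms, ibi_ms[1:])]
    if not diffs:
        return 0.0
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


def variability_to_rgb(rmssd: float, max_rmssd: float = 100.0) -> Tuple[int, int, int]:
    """Map variability to a color: red at zero, blue at max_rmssd or above."""
    fraction = min(max(rmssd / max_rmssd, 0.0), 1.0)
    hue = fraction * (240.0 / 360.0)  # 0 is red, 240 degrees is blue
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))


# Example: three beats roughly 0.8 s apart.
intervals = [800.0, 810.0, 800.0]
color = variability_to_rgb(rmssd_ms(intervals))
pause = blink_interval_s(75.0)
```

On real hardware the resulting RGB tuple and pause would be handed to the LED driver in the main loop.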

I was excited to find out that a Writing Center tutor was kind enough to donate a Keurig to the office, putting life-giving caffeine in the hands of tutors without the cost of running down to Bookworms Cafe. Alas, it was disturbing to see that the K-Cups used by the machine were being stored in a small basket. Now, I’m not the Queen of England or anything, but I have my limits. The toll on my mental health taken by watching the cups lazily thrown into a pile in the woven container was enough to force me to take action. With less than half an hour of active work, I was able to turn the Writing Center logo, a stylized “W”, into a three-dimensional model complete with holes designed to hold K-Cups.

But there’s another reason I decided to turn my strange idea into a reality: I wanted to highlight the range of resources offered on campus to UConn students. The OPIM Innovate space and the Writing

Artificial Intelligence is an interesting field that has become more and more integral to our daily lives. Its applications range from facial recognition to recommendation systems and automated client services. Such tasks make life a lot simpler, but are quite complex in and of themselves. They rely on machine learning, which is how computers develop ways to recognize patterns. Recognizing patterns accurately, however, requires loads of data. Luckily, in this day and age, there is a variety of data to train on and a variety of problems to tackle.

When I first started working in the Innovate Lab, I saw the LCD plate in one of the cabinet drawers and wanted to know how it worked. I was fascinated with its potential an

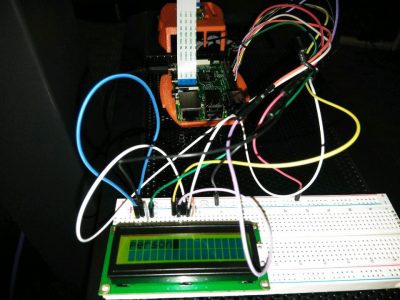

I have been using CAD to make different models and designs since I was in high school. It’s so satisfying to make different parts in a program that you can then bring to life with 3D Printing. In the OPIM Innovation Space, several 3D printers have some really special capabilities and I wanted to put my skills as a designer, and the abilities of the printer, to the test.

Nowadays, companies like Apple and Facebook make software that can unlock your phone or tag you in a photo automatically using facial recognition. Facial recognition first attracted me because of its incredible ability to turn a few lines of code into recognition that mimics the human eye. How can this technology be helpful in the world of business? My first thought was cyber security. Although I had only one semester, I wanted to be able to use video to recognize faces. My first attempt used a Raspberry Pi as the central controller. Raspberry Pis are portable, affordable, and familiar because they are used throughout the OPIM Innovation Space. There is also a camera attachment, which I thought made it perfect for my project. After realizing that the program I attempted to develop used too much memory, I moved to using Python, a programming language, on my own laptop.

Before the introduction of Apple’s “Siri” in 2011, Artificial Intelligence voice assistants were no more than science fiction. Fast forward to today, and you will find them everywhere, from your phone, helping you navigate your contacts and calendar, to your home, helping you around the house. Each smart assistant has its pros and cons, and everyone has their favorite assistant. Over the last few years I have really enjoyed working with Amazon’s Alexa smart assistant. I began working with Alexa during my summer internship at Travelers in 2016. I attended a “build night” after work where we learned how to start developing with Amazon Web Services and the Alexa platform. Since then, I’ve developed six different skills and received Amazon hoodies, t-shirts, and Echo Dots for my work.
